I have a mental model for AI. I find the model useful for understanding deeply complex, mathematical techniques as well as abstract, high-level coding in AI programs. If you’ve never really understood what a “tensor” was, or how “TensorFlow” got its name, this post is for you. To me, “tensors” in AI are as important as “records” in the database world.

Disclaimer

A quick note on mental models before I start. I disagree with naysayers who dismiss analogies to brain function. I personally find these analogies very helpful. After all, they inspired original research into AI back in the 1940s. Further, today’s deep learning systems borrow a lot from biological architectures of the brain. With that, onward!

Tensors and Graphs

Let’s look inside Bert’s head, shall we?

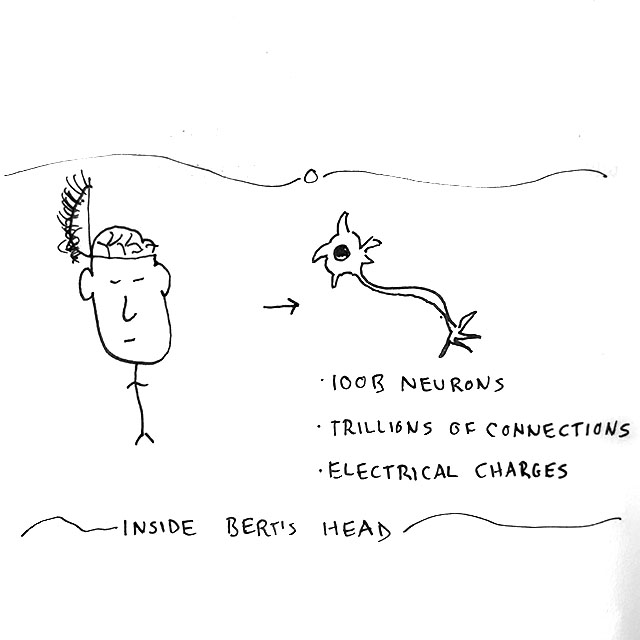

The top, gray layer of Bert’s brain is about the size and thickness of a large dinner napkin, crammed and crunched into a skull. The nooks and crannies are filled with tiny neurons, whose cells look roughly like the figure below (not drawn to scale).

Inside Bert’s head you’ll see layers of neurons. If we separated them like we did in 7th grade biology class, we’d see a rich baklava of gray layers, all connected by sinews of neurons.

Tracing these threads in Bert’s nervous system, we’d see the neurons connecting directly to Bert’s eyes for seeing, ears for hearing, and so forth. Bert thinks or “computes” by detecting subtle chemical charges from his senses, propagating charges through his vast, intricate network of neurons.

At some point, the visuals from Bert’s eyes fire enough neurons, which fire others, and so on. Then Bert feels a certain tickle in his brain and bellows:

“Cat!”

Now for some science.

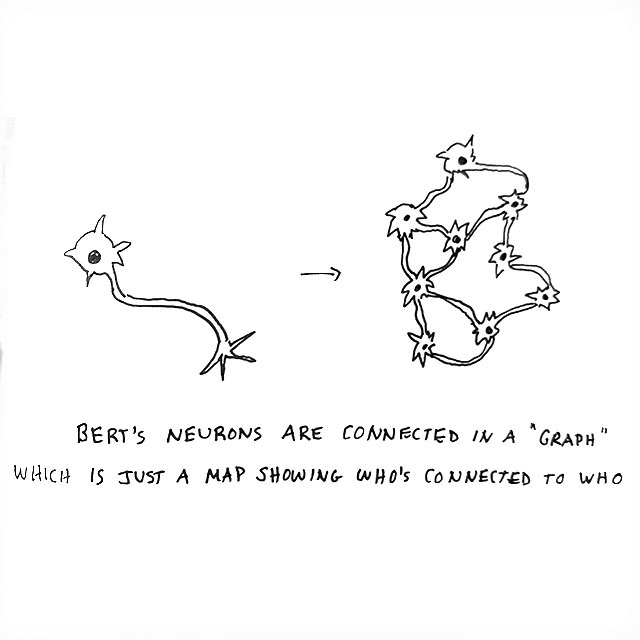

Let’s draw a map of Bert’s neurons, similar to how we’d draw a map of airplane flights. The bodies of the neurons are our “cities,” and the long tubes that connect them are our “flights.” In AI we call this a “computational graph.” Let’s graph 9 of Bert’s neurons.

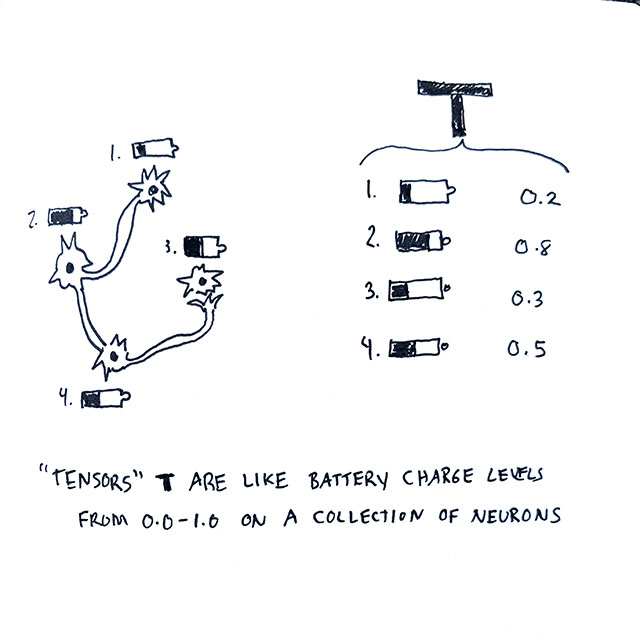
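Here is a minimal sketch of that map in Python. The wiring is made up purely for illustration (the names `n1` to `n9` and the `incoming` helper are my own assumptions, not anything measured from Bert). Each neuron is a "city," and its list holds the "flights" leaving it.

```python
# Hypothetical wiring for 9 neurons; the connections are invented for illustration.
# Keys are neurons ("cities"); values list the neurons they send signals to ("flights").
graph = {
    "n1": ["n4", "n5"],
    "n2": ["n4", "n5", "n6"],
    "n3": ["n5", "n6"],
    "n4": ["n7", "n8"],
    "n5": ["n7", "n8", "n9"],
    "n6": ["n8", "n9"],
    "n7": [],
    "n8": [],
    "n9": [],
}


def incoming(graph, target):
    """Return the neurons that send a signal to `target`."""
    return [src for src, dests in graph.items() if target in dests]


print(len(graph))             # 9
print(incoming(graph, "n5"))  # ['n1', 'n2', 'n3']
```

A plain dictionary is enough for a graph this small. Real AI tools build much larger graphs for you behind the scenes.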

Neurons in Bert’s head carry a tiny electrical charge. Imagine if we could see this charge as a battery level. We can write down the battery levels for all 9 neurons in the graph. We’ll use 0.0 to mean a dead battery, 1.0 to mean a full battery. 0.50 is half-charged, 0.25 is a quarter charge, and so on. You get the idea.

The list of battery levels is called a “tensor.” We can be super fancy here, and use “higher order” math:

- We can list battery levels in a column in Excel, and call it a “1-dimensional” or “1-d” tensor. 1-d is just a vertical list.

- We can put battery levels in a spreadsheet, making sure to fill every row and column in a nice rectangle, without missing a spot. That’s a “2-d” tensor. 2-d is like a stock chart, with two dimensions “time” and “stock price.”

- We can copy that sheet to another, and another. Mess the numbers up a bunch, but still keep them between 0.0 and 1.0. Then we’d have sheets of tensors, or a “3-d” tensor. A common 3-d tensor is a digital image, one sheet each for the pixel values of red, green and blue.

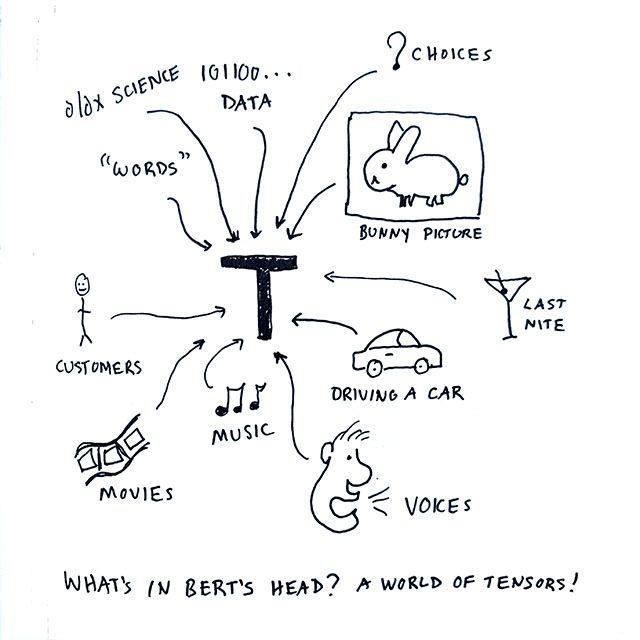
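To make the three flavors concrete, here is a small sketch using plain Python lists. The battery levels are invented, and `shape` is a helper I wrote for this example (dedicated math libraries offer the same idea built in). It assumes every list is a full rectangle with no missing spots.

```python
def shape(tensor):
    """Return the size of each dimension of a rectangular nested list."""
    dims = []
    while isinstance(tensor, list):
        dims.append(len(tensor))
        tensor = tensor[0]
    return tuple(dims)


# 1-d: battery levels for 9 neurons (values are made up)
levels_1d = [0.0, 0.25, 0.5, 1.0, 0.75, 0.1, 0.9, 0.3, 0.6]

# 2-d: the same numbers laid out as a 3x3 spreadsheet
sheet_2d = [
    [0.0, 0.25, 0.5],
    [1.0, 0.75, 0.1],
    [0.9, 0.3, 0.6],
]

# 3-d: a tiny 2x2 image, one sheet each for red, green and blue
image_3d = [
    [[1.0, 0.0], [0.0, 1.0]],  # red
    [[0.5, 0.5], [0.5, 0.5]],  # green
    [[0.0, 1.0], [1.0, 0.0]],  # blue
]

print(shape(levels_1d))  # (9,)
print(shape(sheet_2d))   # (3, 3)
print(shape(image_3d))   # (3, 2, 2)
```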

Bert represents everything as a tensor. Imagine all the amazing things Bert holds in his head as tensors. Instructions for driving a car, the taste of his martini from last night, a picture of a bunny, or words he’s reading are all… tensors.

Tensor Processing

Bert’s brain is a tensor processor. Tensors in, tensors out. Tensors flow to think. Interestingly enough, the most popular tool among AI nerds today is… TensorFlow.

We can reframe human thinking as tensor activity. We map sensory experience into tensors. We flow those tensors through the brain’s graph, producing more tensors. Our bodies interpret those tensors by moving, speaking, writing, drawing or doing burpees.

Here are some examples:

- Bert can hear someone speak (a tensor of frequencies over time) and write down words he’s heard (a tensor representing words in a sequence). That’s called automatic speech recognition.

- Bert can watch (image tensors over time) and listen (sound tensors over time), then figure out how to turn the steering wheel (a “control” tensor telling us the angle of turn and how fast), press the brake (another control tensor), or play with the turn signal (a logical on/off tensor). That’s called autonomous driving.

- Bert can see a description of a bunny (a sequence of word tensors) and then draw that bunny (generating an image tensor). That’s often done by generative adversarial networks that specialize in creating big tensors out of smaller ones.

- Bert can see a picture of a bunny (an image tensor) and label it with the word “b u n n y” (a label tensor). That’s called image classification.

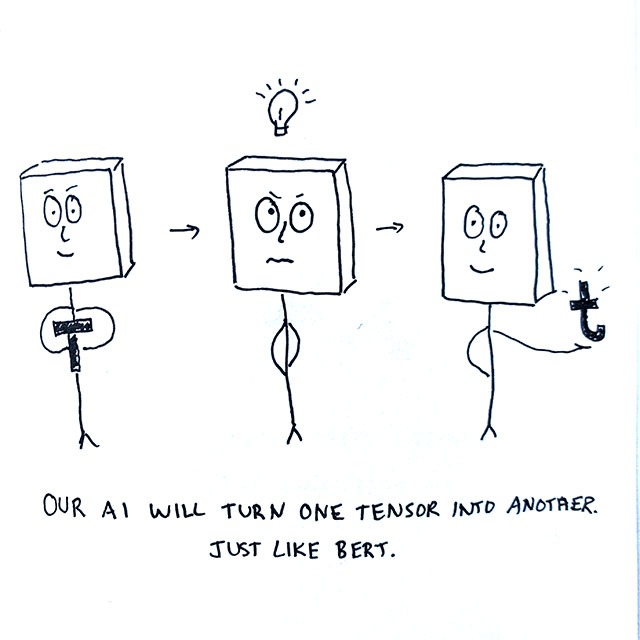

A modern artificial intelligence captures this approach in software. Tensors come into our machine, they flow through a graph, then other tensors flow out. We use computers to turn normal everyday things into tensors, hand them to an AI, then interpret output tensors just like our bodies do.
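Here is a toy sketch of that round trip: everyday thing in, tensor in the middle, everyday thing out. Everything in it is an assumption for illustration. The labels, the function names, and especially `toy_model`, which is a silly stand-in that scores by brightness rather than a real graph of neurons.

```python
LABELS = ["cat", "bunny"]  # hypothetical labels


def to_tensor(pixels_0_to_255):
    """Turn an everyday thing (raw pixel values) into a tensor of values in 0.0..1.0."""
    return [p / 255 for p in pixels_0_to_255]


def toy_model(x):
    """Stand-in for a real graph: score each label from the average brightness."""
    brightness = sum(x) / len(x)
    return [1.0 - brightness, brightness]  # [score for "cat", score for "bunny"]


def interpret(scores):
    """Turn the output tensor back into something a person can read."""
    best = max(range(len(scores)), key=lambda i: scores[i])
    return LABELS[best]


x = to_tensor([255, 204, 230, 255])  # a bright, mostly white picture
y = toy_model(x)
print(interpret(y))  # bunny
```

The point is the shape of the pipeline, not the model. Swap `toy_model` for a trained graph and the code around it stays the same.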

Shockingly, this works. Not only does it work, it works extremely well at billions of operations per second (i.e., at the speed of your phone). On some tasks, the performance is superhuman. The major breakthrough came in 2012, and the researchers behind deep learning later won the Turing Award, often called the Nobel Prize of computing!

Tensor Models

The computational graphs consist of artificial neurons, another name for math equations with tensor values. The most popular neuron multiplies input values by constants called weights, then adds constants called biases, to yield outputs. You may recall this equation of a line,

y = m * x + b

The input tensor is “x.” The output tensor is “y.” The value “m” is a weight. The value “b” is a bias. You may have used this math with stock prices and inventory levels, often using single numbers. Computers do the same thing. They just use more dimensions. A lot more.
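Here is a sketch of the same equation with more dimensions, in plain Python. The function name `linear` and all the numbers are my own choices for illustration. The input “x” becomes a list, “m” becomes a grid of weights with one row per output, and “b” becomes a list with one bias per output.

```python
def linear(x, m, b):
    """Compute y = m * x + b where x is a list, m a grid of weights, b a list of biases."""
    return [
        sum(weight * value for weight, value in zip(row, x)) + bias
        for row, bias in zip(m, b)
    ]


# Single numbers: one input, one output, just like the equation of a line
print(linear([3.0], [[2.0]], [1.0]))  # [7.0]

# More dimensions: three inputs, two outputs
x = [1.0, 2.0, 3.0]
m = [
    [1.0, 0.0, -1.0],  # weights for the first output
    [0.5, 0.5, 0.5],   # weights for the second output
]
b = [0.0, 1.0]
print(linear(x, m, b))  # [-2.0, 4.0]
```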

A major advance in AI introduced a radically simple “fire” macro, like the @macros you’ve used on a spreadsheet, to mimic neurons. Instead of using the output tensor “y” directly, we can send along the result of “firing” an artificial neuron like this:

y = @fire(m * x + b)

The graph plus all of its values (m, b, and the choice of @fire) are collectively called an AI “model.” The model tells a computer how to give us an output tensor “y” when given an input tensor “x.”
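Here is a minimal sketch of one firing neuron. I haven't said which “fire” rule to use, so the one below is an assumption: it passes positive values through and silences everything else, which is one common choice among several. The weights and bias in `model` are picked by hand for illustration.

```python
def fire(z):
    """One possible "fire" rule (an assumption): pass positive values, silence the rest."""
    return max(0.0, z)


def neuron(x, m, b):
    """Compute y = fire(m * x + b) for one neuron with several inputs."""
    z = sum(weight * value for weight, value in zip(m, x)) + b
    return fire(z)


# A hypothetical "model": the values a computer would normally learn
model = {"m": [0.5, -1.0], "b": 0.25}

print(neuron([1.0, 0.5], model["m"], model["b"]))  # 0.25 (the neuron fires)
print(neuron([0.0, 1.0], model["m"], model["b"]))  # 0.0  (the neuron stays quiet)
```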

Learning

Figuring out what to use for “m” and “b” is the process called machine learning. The computer is learning the values that cause it to behave correctly, turning input tensors into the outputs we expect.

Computers do this by guessing. They usually start with small random values close to 0. If they guess too low, they tweak “m” and “b” a bit so the answer nudges higher. If their guess is too high, they do the reverse, nudging lower.

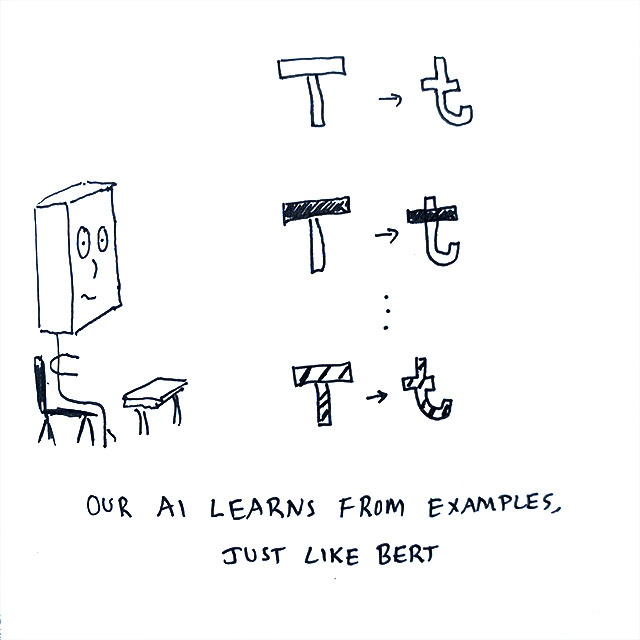

The first way a machine learns is inspired by teaching from an educated supervisor. We give the AI concrete examples. We show an input tensor, ask the AI to guess the output, then compare the guess with the output tensor we expect. This is called supervised learning.

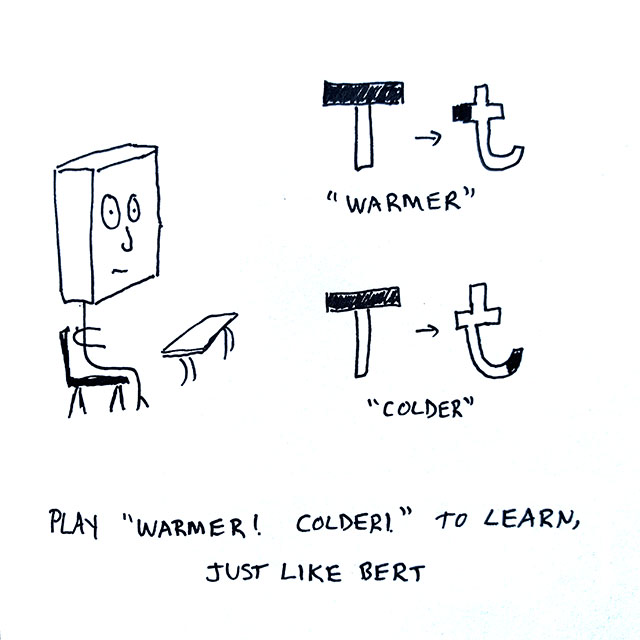
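Here is a sketch of supervised learning on the simplest possible model, a single “m” and “b.” The examples come from a line I picked for illustration (y = 2x + 1), and the starting guesses, step count, and nudge size `rate` are all assumptions. The nudging rule is a basic form of gradient descent. Real systems use fancier variants.

```python
# Hypothetical examples: (input, expected output) pairs taken from y = 2x + 1
examples = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]


def train(examples, steps=2000, rate=0.05):
    """Learn m and b by guessing, checking the error, and nudging."""
    m, b = 0.1, 0.1  # arbitrary small starting guesses
    for _ in range(steps):
        for x, expected in examples:
            guess = m * x + b
            error = guess - expected  # positive: guessed too high; negative: too low
            m -= rate * error * x     # nudge the weight against the error
            b -= rate * error         # nudge the bias against the error
    return m, b


m, b = train(examples)
print(round(m, 3), round(b, 3))  # should land very close to 2 and 1
```

Notice the computer never sees the equation of the line. It only sees examples and how wrong its guesses were.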

Machines also learn by playing a variant of “Warmer, Colder!”. If an AI guesses closer to the answer, we say it’s “warmer” and the AI keeps moving forward. If the AI guesses farther from the answer, we say it’s “colder” and the AI changes direction. This is called reinforcement learning.
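Here is a toy version of the game itself. To be clear, this is a sketch of “Warmer, Colder!” and not a full reinforcement learning algorithm. The function name, step sizes, and the hidden `target` are my own assumptions. The learner never reads the answer, only whether its latest guess was warmer or colder.

```python
def warmer_colder(target, start=0.0, step=1.0, rounds=200):
    """Home in on `target` using only "warmer"/"colder" feedback."""
    guess = start
    distance = abs(target - guess)  # used only to produce the feedback
    direction = 1.0
    for _ in range(rounds):
        candidate = guess + direction * step
        new_distance = abs(target - candidate)
        if new_distance < distance:
            # "Warmer": accept the guess and keep moving the same way
            guess, distance = candidate, new_distance
        else:
            # "Colder": turn around and take smaller steps
            direction = -direction
            step /= 2
    return guess


print(round(warmer_colder(7.3), 3))  # should end up very close to 7.3
```

Halving the step after each “colder” is a design choice of mine. It lets the guesses settle down instead of bouncing around the answer forever.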

What? Were you expecting something more exotic? Elementary school teaching, Warmer Colder, and Tensors? Is that all there is?

Yes. Well, pretty much.

Don’t forget, we’re basically a bag of water with a worm in the middle for energy, plus some gray jelly up top to avoid being eaten. We have some stiff carbon things holding us together, letting us move a bit better than a jellyfish. Humans have a long way to go, evolution-wise.

The Future

The tensor model is useful for understanding the latest AI research, too! Four simple ideas are propelling us forward into an exciting future:

- Some researchers invent clever ways to represent the world with tensors.

- Other scientists invent graphs, or ways to create graphs, for turning big tensors into small ones, or small ones into big ones.

- Others invent neat ways to learn “m,” “b” and “fire.” More recently they’ve begun to learn the graph itself, too.

- The last batch use tensors and graphs to do cool things.

Of course, there’s always the brave Monty Python-ish researcher pursuing a fifth idea, one that doesn’t fit my tensor model. Perhaps you’re the lone genius who will figure out what comes next after tensors! Your bucket is thus:

- “And now for something completely different.”

Conclusion

The tensor model of tensors in, tensors out, flowing through graphs, is a simple yet powerful technique to understand and build AI systems.

You’re probably wondering how exactly we can represent business, documents, web sites, mobile apps, voice assistants, customers, inventory, risk, driverless cars and more with this approach. How do we protect against hidden biases in our tensors? How do we make tensors that protect our privacy, in areas like medicine and finance?

I’ll be sharing future posts to help you through all that. I’ll also be using techniques that will feel just as easy as spreadsheet @macros, leveraging the most popular scripting languages of the Web.

Thanks for tuning in! If you enjoyed this, please follow me on Twitter, connect on LinkedIn, or give me a shout-out. That way we can stay in touch through humanity’s incredible journey forward.